The Algorithmic Bias of Pakistani Content: Shaping Narratives, Amplifying Voices, and Questioning Organic Visibility

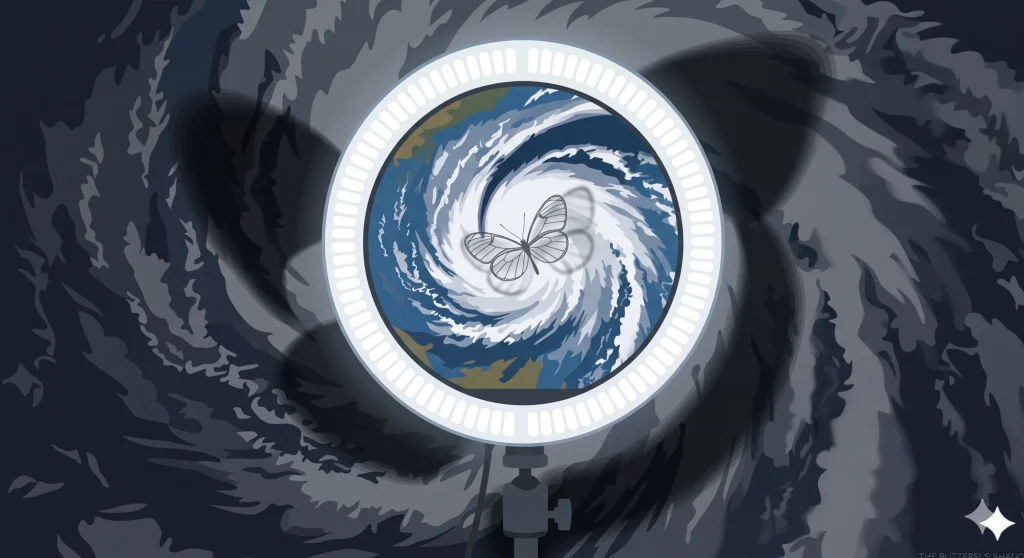

In the crowded digital marketplaces of Pakistan, where a young designer in Lahore films an Urdu vlog of a street-food stand or an activist in Quetta documents the state of the environment, success depends not only on the originality or usefulness of the content, but also on the invisible hand of algorithms. Online platforms such as YouTube, TikTok, Facebook, Instagram, and X (previously Twitter) claim to provide equal opportunity: the premise is that anyone with a smartphone and a good story can reach millions. Yet beneath this democratic facade lies a complex system of recommendation engines, content-ranking systems, and automated moderation tools that quietly shapes what Pakistanis watch, post, and eventually perceive.

As we navigate 2026, it is becoming evident that these digital platforms do not just host Pakistani content; they architect its appearance. This essay evaluates algorithmic bias in the Pakistani digital context and considers how these infrastructures construct and reinforce national discourse, give greater prominence to some voices over others, and cast fundamental doubt on the myth of organic online visibility.

The invisible bias mechanism

Algorithmic bias is not always the result of a deliberate decision to censor. Rather, it is the product of machine-learning models, trained on large and skewed datasets, that reproduce human biases, business interests, and the constraints of the technology. Social networking platforms are optimised for ‘engagement’, measured in likes, comments, shares, and watch time. Because content that provokes a reaction is shown to more people, who react in turn, this creates a vicious cycle that rewards sensationalism over substance. The bias is amplified in Pakistan, where global technology centres are seriously disconnected from realities on the ground.
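The engagement logic described above can be illustrated with a minimal sketch. It assumes a simple weighted sum of likes, comments, shares, and watch time; the weights, the `Post` class, and the two sample posts are all hypothetical, since platforms do not publish their actual ranking formulas.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only for illustration; real platforms
# do not publish how they weigh engagement signals.
WEIGHTS = {"likes": 1.0, "comments": 2.0, "shares": 3.0, "watch_minutes": 0.5}


@dataclass
class Post:
    title: str
    likes: int
    comments: int
    shares: int
    watch_minutes: float


def engagement_score(post: Post) -> float:
    """Weighted sum of engagement signals; it measures reaction, not quality."""
    return (
        WEIGHTS["likes"] * post.likes
        + WEIGHTS["comments"] * post.comments
        + WEIGHTS["shares"] * post.shares
        + WEIGHTS["watch_minutes"] * post.watch_minutes
    )


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order posts from highest to lowest engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)


# Invented numbers for two hypothetical posts.
report = Post("Water-quality report", likes=120, comments=10, shares=15, watch_minutes=400)
outrage = Post("Outrage clip", likes=300, comments=450, shares=200, watch_minutes=250)

feed = rank_feed([report, outrage])
print([p.title for p in feed])
```

Nothing in the score asks whether a post is accurate or useful. In this toy example the clip that draws the most comments and shares ranks first, even though the report holds viewers for longer.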

Machine-learning models are mostly trained in English-speaking environments. When these systems are deployed in the Pakistani market, they often lack the nuanced training data needed to understand the specifics of Urdu, Pashto, Sindhi, or regional accents. This language barrier leads to systematic contextual misunderstanding. Automated systems may treat criticism of the government or a report on human rights as incendiary or threatening, whereas outrage-driven content, which generates high traffic, is placed at the top of the trending tabs.

The growing technological caste system

Nowhere is this phenomenon more visible than in the widening regional and linguistic gaps of the Pakistani internet. New research on Pakistani journalism has started to describe the emergence of a ‘technological caste system’ fuelled by platform prioritisation (Irfan et al., 2026). It produces a virtual pecking order in which English-language or city-focused stories from major hubs like Lahore, Karachi, and Islamabad are pushed to the front of feeds. Meanwhile, grassroots stories from smaller provinces face algorithmic oxygen deprivation.

When the majority or the urban elite use algorithms to boost content that reinforces their beliefs, regional and rural journalism is effectively relegated to a ‘no-man’s-land’. The result of this digital divide is news inequality: local newsrooms see their editorial independence eroded as they are forced to depend increasingly on global platforms with opaque ‘black-box’ methods of distribution. When activism is narrated in a regional language or focuses on a niche provincial concern, the algorithm tends to dismiss it as irrelevant to the broader populace, shutting out the very diversity that holds the Pakistani federation together.

Echo chambers and political polarisation

From a political-science perspective, the greatest harm caused by algorithmic bias is the deepening of ‘filter bubbles’. Algorithms are designed to keep users on the platform for as long as possible by pushing content that reinforces their existing beliefs. This form of personalisation has intensified tribal loyalty and polarisation in Pakistan’s already charged political climate.

The recommendation engine favours content that arouses strong emotional responses, be it anger, fear, or a sense of grievance. This means that moderates, or anyone interested in consensus, are usually drowned out, while polarising stories are widely promoted. Minority opinions that are not aligned with the powerful are often subject to ‘shadow-banning’, a practice in which their visibility is limited without any warning. In the legal void of the Pakistani online ecosystem, individuals have little recourse when their online presence is curtailed under an opaque set of rules that they can neither view nor dispute.

The gendered aspect of patriarchal indignation

Pakistan is one of the countries where algorithmic bias has particularly harsh gendered dimensions. Engagement-driven algorithms have unintentionally stimulated patriarchal outrage in the digital arena. Videos involving the weaponised ‘moral policing’ of women influencers or female public figures frequently generate abundant ‘outrage-clicks’ and vitriol. The algorithm reads this high engagement as a signal that the content should be boosted, forming a vicious cycle in which misogynistic content spreads rapidly.

On the other hand, feminist or progressive stories tend to be suppressed or flagged under community guidelines whose enforcement cannot distinguish empowering discourse from the ‘incendiary’ discourse that automated moderation is programmed to shun. Creators and activists, particularly women, testify that they must constantly struggle against the algorithm to make their voices heard. In many cases, they have to sacrifice parts of their message to fit the patterns rewarded by platform engagement metrics. This is a form of online bullying enabled not just by individuals, but by the very structure of the platforms themselves.

The myth of organic visibility

The main question persists: is online visibility truly organic? The evidence points overwhelmingly to ‘no’. What appears as ‘trending’ or ‘For You’ is often an engineered result that favours profit over pluralism. Proprietary algorithms put a premium on the volume of engagement rather than the quality of discourse. This has sustained disinformation ecosystems in Pakistan, in which low-context, high-emotion content spreads more quickly than verified facts.

The social implications are extensive. Algorithmic bias entrenches a status quo in which those already in power are guaranteed to remain heard: the urban, the English-speaking, and the politically dominant. This stifles the creative sectors and harms democratic deliberation. The curation of our digital reality by an invisible hand weakens the capacity of the populace to have meaningful, informed discussions.

The need for transparency and the legal vacuum

From the standpoint of a legal professional, the most pressing issue is that these systems are not accountable. Pakistan’s legal framework, including the Prevention of Electronic Crimes Act (PECA) 2016, focuses on policing individual speech but has practically no provisions regulating the algorithms that shape such speech. Algorithmic transparency does not exist at all.

A change in policy is required, under which recommendation systems are independently audited and the criteria used for ranking are disclosed. Moreover, investment is urgently needed in AI models that are trained locally and are sensitive to the linguistic and cultural particularities of Pakistan. Using Silicon Valley logic to censor Pakistani content is, in effect, abandoning our digital sovereignty.
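One quantity an independent audit might report is how each language group’s share of impressions compares with its share of published posts. The sketch below is only an illustration of that idea: the function `exposure_ratios`, the log format, and the sample figures are hypothetical and do not reflect any platform’s data or an established audit standard.

```python
from collections import defaultdict


def exposure_ratios(log):
    """Return impression share divided by post share for each group.

    `log` is an iterable of (group, impressions) pairs, one per post.
    A ratio above 1 means the group receives more exposure than its
    share of posts would suggest; below 1 means it receives less.
    """
    post_counts = defaultdict(int)
    impressions = defaultdict(int)
    for group, views in log:
        post_counts[group] += 1
        impressions[group] += views

    total_posts = sum(post_counts.values())
    total_views = sum(impressions.values())
    if total_posts == 0 or total_views == 0:
        return {}

    return {
        group: (impressions[group] / total_views) / (post_counts[group] / total_posts)
        for group in post_counts
    }


# Invented sample: one post per language with made-up impression counts.
sample_log = [("English", 700), ("Urdu", 200), ("Sindhi", 60), ("Pashto", 40)]
ratios = exposure_ratios(sample_log)
for group, ratio in ratios.items():
    print(f"{group}: {ratio:.2f}")
```

A single ratio cannot show why a gap exists, since audience size and posting habits also matter. Publishing figures of this kind would nonetheless give regulators and newsrooms something concrete to dispute.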

Reclaiming the digital public square

Digital platforms are not mere reflections of Pakistani trends; they are their producers. Addressing this bias requires moving beyond technical solutions towards a general demand for rigour, transparency, and local agency. Pakistan needs to promote a hybrid model of gatekeeping, one that combines human editorial responsibility and ethical oversight with algorithmic accountability and transparency.

We should strive to turn our online environment from a place of suppression into an actual marketplace of ideas. We should insist on systems in which all voices, be it a street-food vlog from Lahore or an environmental report from Quetta, have a truly fair and transparent opportunity to be heard. The question is not just whether visibility can become organic, but whether we, as a society, are willing to insist on the digital rights and legal frameworks that would enable it to do so. Only then can the real colour of Pakistani stories shine through the internet as it does within the minds and hearts of its people.